Welcome to the {Tech: Europe} Paris AI Hackathon! We brought voice AI APIs and Lego boxes. You bring the ideas. The SLNG team is here all weekend. Come say hi, grab a sticker, ask us anything.

What is SLNG?

SLNG is one API for real-time speech. Text-to-Speech, Speech-to-Text, and Voice Agents, all under 200ms. We aggregate providers like Deepgram, Rime, Cartesia, and Kugel behind a single endpoint. You switch models by changing the URL, not your code.

Text-to-Speech

Text in, audio out. Multiple voices and languages.

Speech-to-Text

Transcribe audio files or live streams.

Voice Agents

Full voice agents that make and receive phone calls.

Get your API key

Create an account

Sign up at app.slng.ai. It takes less than a minute.

Generate an API key

Go to the API Keys page in your dashboard and create a new key. Copy it somewhere safe — you won’t be able to see it again.

Start building

Grab the code for whatever you need.

- Text-to-Speech

- Speech-to-Text

- Voice Agents

- Unified API

Send text, get audio back.

Pick a different voice by adding "voice": "aura-2-theia-en" to the request body. See all available voices.

Other languages

Switch the model in the URL; the rest of the code is identical. For example, Rime Arcana v3 covers Spanish.

- Spanish, Hindi, English (HTTP): slng/rime/arcana:3-es, slng/rime/arcana:3-hi, slng/rime/arcana:3-en

- French (WebSocket only): Cartesia Sonic 3 or Kugel
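
A minimal Python sketch of the request described above: text goes to a model-specific URL, and "voice" is optional in the body. Only the model IDs and the "voice" field come from this page. The base URL, the Bearer authorization header, and the "text" field name are assumptions for illustration, so check the full docs for the exact request shape. The sketch builds requests without sending them.

```python
import json
import urllib.request

# ASSUMPTION: hypothetical base URL; look up the real one in the SLNG docs.
BASE_URL = "https://api.slng.ai/v1/tts"


def build_tts_request(api_key, model, text, voice=None):
    """Build (but do not send) a TTS request for the given model ID."""
    body = {"text": text}  # ASSUMPTION: the text field is named "text"
    if voice is not None:
        body["voice"] = voice  # "voice" is the field named on this page
    return urllib.request.Request(
        url=f"{BASE_URL}/{model}",  # only the model ID changes between providers
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # ASSUMPTION: Bearer auth
            "Content-Type": "application/json",
        },
        method="POST",
    )


english = build_tts_request("YOUR_API_KEY", "slng/deepgram/aura:2-en",
                            "Hello, Paris!", voice="aura-2-theia-en")
spanish = build_tts_request("YOUR_API_KEY", "slng/rime/arcana:3-es",
                            "¡Hola, París!")

# To actually send one (needs a valid key and the real endpoint):
# with urllib.request.urlopen(english) as resp:
#     audio_bytes = resp.read()
```

The two requests differ only in the model ID at the end of the URL and in the optional "voice" field, which is the point of the single-endpoint design.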

Try it live

Not ready to write code yet? Try the demos in your browser: drop in your API key and go.

Live TTS demo

Type something and hear it back. Try different models and voices.

Live STT demo

Speak into your mic and watch the transcription stream in.

What we want you to build

Use voice and speech AI in whatever way gets you excited. Here are some starting points, but go wherever the idea takes you.

Voice assistants

An agent that books appointments, handles support, tutors students. Pick a task and nail it.

Accessibility tools

Speech interfaces, screen readers, audio descriptions. Make something more people can use.

Creative audio apps

Podcasts, storytelling, audio games, voice-driven art. Surprise us.

Real-time transcription

Live captioning, meeting notes, multilingual translation. Turn speech into something useful.

Prizes

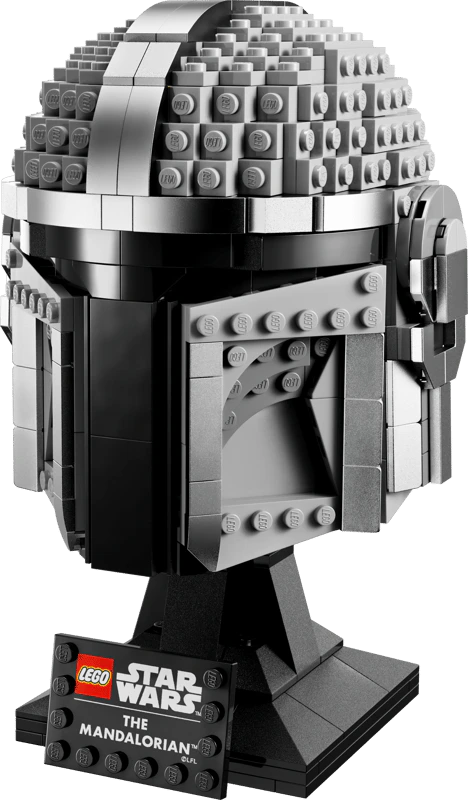

Best overall project wins a Lego Star Wars set. Demo what you built, impress the judges, take home some bricks.

FAQ

How much API credit do I get?

€4 in free credits when you sign up. That covers roughly 80 hours of audio generation or transcription, so you won’t run out this weekend.
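
As a rough budgeting aid, the figures in that answer imply an average of about €0.05 per hour of audio. The sketch below only does that arithmetic. The helper name is hypothetical, and actual per-model prices may differ, so treat the result as an estimate.

```python
FREE_CREDIT_EUR = 4.0  # from the answer above
APPROX_HOURS = 80      # "roughly 80 hours", so results are estimates

eur_per_hour = FREE_CREDIT_EUR / APPROX_HOURS
eur_per_minute = eur_per_hour / 60


def estimated_cost_eur(minutes_of_audio):
    """Rough cost of some audio at the implied average rate (hypothetical helper)."""
    return minutes_of_audio * eur_per_minute
```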

What if I run out of credits?

Come find us at the event. We’ll top you up.

Do my credits expire?

Nope. Keep building after the hackathon, polish your project, start a new one. They’re yours.

Which models should I use?

For most projects, start with:

- English TTS: slng/deepgram/aura:2-en (low latency)

- English STT: slng/deepgram/nova:3-en (high accuracy)

- Spanish or Hindi TTS: slng/rime/arcana:3-es, slng/rime/arcana:3-hi

- French TTS (WebSocket only): Cartesia Sonic 3 or Kugel

- Multilingual STT: slng/deepgram/nova:3-multi (auto-detects 10+ languages)
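
If your project handles several languages, these recommendations can live in a small lookup table. The sketch below is illustrative: the model IDs are copied from the list above, while the function name and the fallback to multilingual STT are hypothetical choices. French TTS is left out because no HTTP model ID is listed for it here.

```python
# Model IDs copied from the list above, keyed by (task, language).
RECOMMENDED_MODELS = {
    ("tts", "en"): "slng/deepgram/aura:2-en",
    ("stt", "en"): "slng/deepgram/nova:3-en",
    ("tts", "es"): "slng/rime/arcana:3-es",
    ("tts", "hi"): "slng/rime/arcana:3-hi",
    ("stt", "multi"): "slng/deepgram/nova:3-multi",
}


def pick_model(task, language):
    """Return a recommended model ID for the task and language."""
    key = (task, language)
    if key in RECOMMENDED_MODELS:
        return RECOMMENDED_MODELS[key]
    if task == "stt":
        # Hypothetical policy: let the multilingual model auto-detect.
        return RECOMMENDED_MODELS[("stt", "multi")]
    raise LookupError(f"no HTTP model listed here for {task}/{language}")
```

Calling pick_model("tts", "fr") raises LookupError, which is a reminder that French TTS needs the WebSocket route.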

Can I use SLNG with my favorite framework?

Yes. It’s standard HTTP and WebSocket, so it works with anything. The Unified API follows the same URL pattern for every provider, so you can drop SLNG into an existing voice pipeline without rewriting your integration.
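
Every model ID on this page has the same shape, so pipeline code can treat the provider as data rather than as a separate integration. The sketch below splits an ID into its parts. The grammar (namespace/provider/name:version-variant) is inferred from the IDs listed on this page, not taken from a documented spec, and the names used are hypothetical.

```python
from typing import NamedTuple


class ModelId(NamedTuple):
    namespace: str
    provider: str
    name: str
    version: str
    variant: str


def parse_model_id(model_id):
    """Split an ID such as 'slng/rime/arcana:3-es' into its parts.

    ASSUMPTION: the namespace/provider/name:version-variant shape is
    inferred from the examples on this page.
    """
    namespace, provider, rest = model_id.split("/")
    name, _, tail = rest.partition(":")
    version, _, variant = tail.partition("-")
    return ModelId(namespace, provider, name, version, variant)
```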

Do I need a paid account?

No. Sign up at app.slng.ai and you’re good to go. The free tier covers the whole hackathon.

Where can I get help?

Find us at the event. We’re around all weekend. You can also check the full docs.